Kevin Cuzner's Personal Blog

Electronics, Embedded Systems, and Software are my breakfast, lunch, and dinner.

Teensy 3.1 Bare-Metal

Introduction

A couple of weeks ago I saw a link on Hackaday to an article by Karl Lunt about using the Teensy 3.1 without the Arduino IDE and building for the bare metal. I was very intrigued, since the Arduino IDE was my only major beef with developing for the Teensy 3.1 and I wanted to be able to work without it. I read through the article and, although it was geared towards Windows, I decided to adapt it to my development style. There were a few things I wanted to do:

- No additional code dependencies other than the teensyduino installation which I already had

- Use local binaries for compilation, not the ones included with teensyduino (it just felt uncomfortable to use theirs)

- Separation of src, obj, and bin directories

- Mixture of c and cpp files in the src directory

- Not needing to explicitly list the files in the src directory to compile

- Selective inclusion of features from the teensyduino installation

I have very little experience writing more complex Makefiles. When I say "complex" I am referring to Makefiles which have the src, obj, bin separation and pull in objects from multiple sources. While this may not seem complex to many people, it's something I have very little experience actually doing by hand (I would normally use a generator of some sort).

I'm writing this in the hope that those without mad Makefile skills, such as myself, can liberate themselves from the Arduino IDE when developing for an awesome platform like the Teensy 3.1.

All code for this example can be found here: https://github.com/kcuzner/teensy31-blinky-bare-metal

Prerequisites

As my first order of business, I located the arm-none-eabi binaries for my Linux distribution. These can also be found for Windows, as noted in Karl Lunt's article. Random sidenote: I found this description of why arm-none-eabi is called arm-none-eabi. Very informative. Anyway, for those who run Arch Linux, the following packages are needed:

- arm-none-eabi-gcc (contains the compilers)

- arm-none-eabi-binutils (contains the linker, objdump, and other things for manipulating the binaries into hex files)

- make (we are using a makefile...)

Hopefully this gives a bit of a hint on what packages may need to be installed on other systems. For Windows, the compiler is here and make can be found here or by googling around. I haven't tested any of this on Windows and would advocate using Linux for this, but it shouldn't be hard to modify the Makefile for Windows.

My Flow

For C and C++ development I have a particular flow that I like to follow. This is heavily influenced by my usage of Code::Blocks and Visual Studio. I like to have a src directory where I put all of my sources, an include directory where I put all of my headers, an obj directory for all the obj, d, & lst files, and a bin directory for my executable output. I've always had such a hard time with raw Makefiles because I could never quite get that directory structure working. I was never quite satisfied with my feeble Makefile attempts, which ended up placing the object files in the root directory where the sources had to be. This Makefile represents the first time I was ever able to actually have a real bin, obj, src structure that works.

Compiling object files to obj & looking in src for source

A working description of this can be found in the Makefile in my github repository I mentioned earlier.

Makefiles work by defining a series of "targets" which have "dependencies". Every dependency can also be the name of a target, and a target may have multiple ways of being resolved (something I never realized before). So, here are the parts of the Makefile which enable searching in src for both c and cpp files and doing specific actions for each, compiling them into the obj directory:

```makefile
# Project C & C++ files which are to be compiled
CPP_FILES = $(wildcard $(SRCDIR)/*.cpp)
C_FILES = $(wildcard $(SRCDIR)/*.c)

# Change project C & C++ files into object files
OBJ_FILES := $(addprefix $(OBJDIR)/,$(notdir $(CPP_FILES:.cpp=.o))) $(addprefix $(OBJDIR)/,$(notdir $(C_FILES:.c=.o)))

# Example build target
build: $(OUTPUTDIR)/$(PROJECT).elf

# Linker invocation
$(OUTPUTDIR)/$(PROJECT).elf: $(OBJ_FILES)
	@mkdir -p $(dir $@)
	$(CC) $(OBJ_FILES) $(LDFLAGS) -o $(OUTPUTDIR)/$(PROJECT).elf

# C file compilation for some object file
$(OBJDIR)/%.o : $(SRCDIR)/%.c
	@echo Compiling $<, writing to $@...
	@mkdir -p $(dir $@)
	$(CC) $(GCFLAGS) -c $< -o $@ > $(basename $@).lst

# C++ file compilation for some object file
$(OBJDIR)/%.o : $(SRCDIR)/%.cpp
	@mkdir -p $(dir $@)
	@echo Compiling $<, writing to $@...
	$(CC) $(GCFLAGS) -c $< -o $@
```

Each section above has a specific purpose, and the order can be rather important. The first part uses $(wildcard ...) to pick up all of the C++ and C files. The CPP_FILES variable, for example, will become "src/file1.cpp src/file2.cpp src/etc.cpp" if we had "file1.cpp", "file2.cpp" and "etc.cpp" in the src directory. Similarly, C_FILES picks up any files in src with a .c extension. Next, the filenames are transformed into object filenames living in the obj directory. This is done by first changing the file extension of the files to .o using the $(CPP_FILES:.cpp=.o) or $(C_FILES:.c=.o) syntax. However, these files still look like they are in the src directory (e.g. src/file1.o), so the directory is next stripped off each file using $(notdir ...). Removing the directory doesn't allow for a nested src directory, but that wasn't one of our objectives here. At this point, the files are just names with no directories (e.g. file1.o), so the last step is to make them live in the obj directory using $(addprefix $(OBJDIR)/,...). This completes our transformation, populating OBJ_FILES to look like "obj/file1.o obj/file2.o" etc.

The next part is where we take that list of object files and use them as dependencies for a target. Targets are defined by <target name>: <dependency list>, followed by a list of commands to execute after resolving the dependencies. IMPORTANT: The list of commands needs to be indented by a tab (\t) character. Spaces will not work (make will complain with something like "missing separator" along with a line number). A target is anything that we pass into make. If we don't pass one, make builds the first target defined in the Makefile, which by convention is 'all'. The "dependencies" are files which must be "up to date" before the target's commands are run.

In our example, we use $(OBJ_FILES) as a dependency of "$(OUTPUTDIR)/$(PROJECT).elf", which is in turn a dependency of "build". This tells make that when we run "make build", it needs to resolve the dependency "bin/<project>.elf", which in turn needs to resolve "obj/file1.o", "obj/file2.o", and "obj/etc.o" (going from our example in the previous paragraph). This is where the next couple of targets come in. A target will only be executed if make can find some rule to resolve all of its dependencies. We will use "obj/file1.o" as an example here. There are actually 2 targets with that name: "$(OBJDIR)/%.o: $(SRCDIR)/%.c" and "$(OBJDIR)/%.o: $(SRCDIR)/%.cpp". Note that the target names here are exactly the same even though the dependencies are different. Now, how does "$(OBJDIR)/%.o" match "obj/file1.o"? Make does something called "pattern matching" when the % sign is used. It says "match something that looks like $(OBJDIR)/<some file>.o", which our "obj/file1.o" happens to match. The cool part is that once the target name is matched using a %, the dependencies get to use % to substitute the exact same text. Our % here is "file1", so it follows that the dependency must be "$(SRCDIR)/file1.c". Now, our example used "file1.cpp", not "file1.c", and this is where defining multiple targets with the same name but different dependencies comes in. A target will only be executed if its dependencies can be resolved to an actual file and/or another target. Our first target won't match, since it says that the source file should be a C file. So, make goes on to the next target that matches the name, which has a dependency of "$(SRCDIR)/file1.cpp". This one matches, so the commands following that target are executed.

When executing a target ("$(OBJDIR)/%.o: $(SRCDIR)/%.cpp" in our example), there are some special variables available for use. These are described here, but I will discuss two important ones that I used: $@ and $<. $@ is the name of the target (so, "obj/file1.o" in our case) and $< is the name of the first dependency ("src/file1.cpp" in our case). This lets us pass these names into the commands that we execute. Our Makefile will first create the obj directory by calling "mkdir -p $(dir $@)", which is translated into "mkdir -p obj/" since $(dir $@) gives us "obj/". Next, we actually compile $< (translated to "src/file1.cpp"), outputting it to $@ (translated to "obj/file1.o").

Outputting everything to bin

Compared to the pattern matching and multiple target definitions that we discussed above, this part is simple. We just prefix all of our "binary" output files with a directory, which is set as $(OUTPUTDIR) in my Makefile. Here is an example:

```makefile
all:: $(OUTPUTDIR)/$(PROJECT).hex $(OUTPUTDIR)/$(PROJECT).bin stats dump

$(OUTPUTDIR)/$(PROJECT).bin: $(OUTPUTDIR)/$(PROJECT).elf
	$(OBJCOPY) -O binary -j .text -j .data $(OUTPUTDIR)/$(PROJECT).elf $(OUTPUTDIR)/$(PROJECT).bin

$(OUTPUTDIR)/$(PROJECT).hex: $(OUTPUTDIR)/$(PROJECT).elf
	$(OBJCOPY) -R .stack -O ihex $(OUTPUTDIR)/$(PROJECT).elf $(OUTPUTDIR)/$(PROJECT).hex

# Linker invocation
$(OUTPUTDIR)/$(PROJECT).elf: $(OBJ_FILES)
	@mkdir -p $(dir $@)
	$(CC) $(OBJ_FILES) $(LDFLAGS) -o $(OUTPUTDIR)/$(PROJECT).elf

stats:

dump:
```

We see here that any output we create as a result of the compilation (.elf, .hex, .bin) ends up in $(OUTPUTDIR). Further, our "all" target asks make to create both a bin file and a hex file, along with two other targets called "stats" and "dump". These are just scripts that execute the "size" and "objdump" commands on our bin file.

Using Teensyduino without compiling everything

This was by far the most frustrating part to get working. Everything about the Makefiles was readily available online, with some serious googling. However, getting things to actually compile was a different story.

The thing that makes this complex is the fact that it seems the Teensyduino libraries were not designed to be used independently of each other. I will cover, in order, what steps I had to take in order to get this to work.

The most important file we need is called "mk20dx128.c". This sets up a lot of things relating to interrupts, along with the Phase Lock Loop (PLL) which controls the speed of the Teensy's processor. Without this configuration, we don't get interrupts and the processor runs at a pitiful 16MHz. The only problem is that mk20dx128.c references a few functions that are either part of the standard library and not used often (making them difficult to search for) or are defined in other files, increasing our dependency count.

My first mistake was explicitly using the linker to link all of my object files (wait...aren't we supposed to use the linker? Read on.). Since the arm-none-eabi toolchain targets a whole family of ARM cores rather than one specific CPU, the linker on its own doesn't know which build of the standard library (libc) to use. This results in an undefined reference to "__libc_init_array()", a function used during the initialization phase of a program which is rarely invoked by code outside the standard library itself. mk20dx128.c uses this function in its custom startup code, which prepares the processor for running our program. To solve this, I wanted to tell the linker that I was using a Cortex-M4 CPU so that it would know which libc to include and thereby resolve the reference. However, this proved difficult to do when directly invoking the linker. Instead, I took a hint from the Makefile that comes with Teensyduino and used the following command to link the objects:

```makefile
$(CC) $(OBJ_FILES) $(LDFLAGS) -o $(OUTPUTDIR)/$(PROJECT).elf
```

Which more or less translates to (using our example from earlier):

```sh
arm-none-eabi-gcc obj/file1.o obj/file2.o obj/etc.o obj/mk20dx128.o $(LDFLAGS) -o bin/$(PROJECT).elf
```

You might think that we should be using arm-none-eabi-ld instead of arm-none-eabi-gcc. However, by using arm-none-eabi-gcc I was able to pass the argument "-mcpu=cortex-m4", which allowed GCC to tell the linker which standard library to use. Wonderful, right? So all of our problems are solved? Not yet.

The next thing is that mk20dx128.c has a lot of external dependencies. It uses a function defined in pins_teensy.c which in turn requires functions defined in both analog.c and usb_dev.c which opens another can of worms. Ugh. I didn't want this many dependencies and I couldn't see a way to escape compiling nearly the entire Teensyduino library just to run my simple blinking program. Then, it dawned on me: I could use the same technique that mk20dx128.c uses to define its ISRs to "define" the functions that pins_teensy.c was calling that I didn't really want. So, I made a file called "shim.c" which contained the following:

```c
void unused_void(void) { }

void usb_init(void) __attribute__ ((weak, alias("unused_void")));
```

I decided to include "yield.c" and "analog.c" since those weren't too big. That left just the USB code. The only USB function actually called from pins_teensy.c was "usb_init". What the above declaration tells the toolchain is "I am defining usb_init(void) here (as another name for unused_void(void)) unless you find another definition of usb_init(void) somewhere else". The "weak" attribute turns this definition of usb_init into a weak symbol, which the linker will only use if no ordinary (strong) definition of usb_init exists. Sidenote: a program can contain any number of weak definitions of a given function or variable, but at most one strong definition, and when a strong definition is present it wins. The "alias" attribute allows us to say "when I say usb_init I really mean unused_void". The end result is that if nobody defines usb_init(void) anywhere, as would be the situation if I decided not to include usb_dev.c, any calls to usb_init(void) actually call unused_void(void). However, if somebody does define usb_init(void), my definition of usb_init is ignored in favor of theirs. This lets me include USB support in the future if I want to. Isn't that cool? That fixed all of my reference issues and let me actually build the project.

Conclusion

Armed with my new Makefile and a better understanding of how the Teensy 3.1 works from a software perspective, I managed to compile and upload my "blinky" program, which just blinks the onboard LED (pin 13) on and off every 1/4 second. The overall program size was 3% of the total space, which is much more reasonable than the 10-20% it was taking when compiled using the Arduino IDE.

Again, all files from this escapade can be found here: https://github.com/kcuzner/teensy31-blinky-bare-metal

First thoughts on the Teensy 3.1

Wow it has been a while; I have not written since August.

I entered a contest of sorts this past week which involves building an autonomous turret which uses an ultrasonic sensor to locate a target within 10 feet and fire at it with a tiny dart gun. The entire assembly is to be mounted on servos. This is something my University is doing as an extra-curricular for engineers and so when a friend of mine asked if I wanted to join forces with him and conquer, I readily agreed.

The most interesting part to me, by far, is the processor to be used. It is going to be a Teensy 3.1:

This board contains a Freescale ARM Cortex-M4 microcontroller along with a smaller, non-user-programmable microcontroller that assists in the USB bootloading process (the exact details of that interaction are mostly unknown to me at the moment). I have never used an ARM microcontroller before, and never a microcontroller with as many peripherals as this one has. The datasheet is 1200 pages long and, in my opinion, isn't even very verbose. It could easily be 3000 pages if it included the level of detail usually found in AVR and PIC datasheets (code examples, etc). The processor runs at 96MHz as well, making it the most powerful embedded computer I have used aside from my Raspberry Pi.

The Teensy 3.1 is Arduino-compatible by design. However, it can also be used without the Arduino software. I have never used an Arduino before, since I rather enjoy using microcontrollers in a bare-bones fashion. However, it has become increasingly difficult for me to experiment with the latest microcontrollers on breadboards, since more and more of them are only available in surface-mount packages.

The Arduino IDE

Oh my goodness. Worst ever. Ok, not really, but I have a hard time justifying using it other than the fact that it makes downloading to the Teensy really easy. This post isn't meant to be a review of the Arduino IDE, but the editor could use some serious improvements IMHO:

- Tab indentation level: Some of us would like to use something other than 2 spaces, thank you very much. We don't live in the '70s where horizontal space is at a premium, and I prefer 4 spaces. Purely personal preference, but I feel like the option should be there.

- Ability to reload files: The inability to reload files, and the fact that it seems to compile from a cache rather than from the file itself, makes the Arduino IDE basically incompatible with git or any other source control system. This is a serious problem, in my opinion, and requires me to restart the editor whenever I check out a different branch.

- Real project files: I understand the aim for simplicity here, but when you have a chip with 256KB of flash on it, your program is not going to be 100 lines and fit into one file. At the moment, the editor just takes everything in the directory and compiles it by file extension. There are no subdirectories, and every file is displayed as a separate tab with no way to close it. I am in the habit of separating my source, and not having the ability to structure my files how I please really makes me feel hampered. To make matters worse, the IDE saves the original sketch file (which is just a cpp file that will be run through their preprocessor) with its own special file extension (*.ino), which makes it look like it should be a project file when in reality it is not.

There are a few things I do like, however. I like their library of things that make working with this new and foreign processor rather easy. I also like that their build system is very cross-platform and easy to use.

First impression of the processor

I must first say that the level of work that has gone into the surrounding software (the header files, the teensy loader, etc) truly shows and makes it a good experience to use the Teensy, even if the Arduino IDE sucks. I tried a Makefile approach using Code::Blocks, but it was difficult for me to get it to compile cross-platform and I was afraid that I would accidentally overwrite some bootloader code that I hadn't known about. So, I ended up just going with the Arduino IDE for safety reasons.

The peripherals on this processor are many, and it is hard at times to figure out basic functions, such as the GPIO. The manual for the peripherals is in the neighborhood of 60 chapters long, with each chapter describing a peripheral. So far, I have messed with just the GPIOs and pin interrupts, but I plan on moving on to the timer module very soon. This project likely won't require the DMA or the variety of onboard bus modules (CAN, I2C, SPI, USB, etc), but in the future I hope to have a Teensy of my own to experiment on. The sheer number of registers combined with the 32-bit width of everything is a totally new experience for me. Combine that with the fact that I don't have to worry as much about the overhead of using certain C constructs (struct and function pointers, for example) and I am super duper excited about this processor. Tack on the stuff that PJRC created for using the Teensy, such as the nice header files and the overall compatibility with some Arduino libraries, and I have had an easier time getting this thing to work than with most of the other projects I have done.

Conclusion

Although the Teensy is for a specific contest project right now, at $19.80 for the amount of power it gives, I believe I will buy one for myself to mess around with. I am looking forward to getting more familiar with this processor, and although I resent the IDE I have to work with at the moment, I hope to move to better compilation options that will let me get away from the Arduino IDE.

Pop 'n Music controller...AVR style

Every time I do one of these bus emulation projects, I tell myself that the next time I do it I will use an oscilloscope or DLA. However, I never actually break down and just buy one. Once more, I have done a bus emulation project flying blind. This is the harrowing tale:

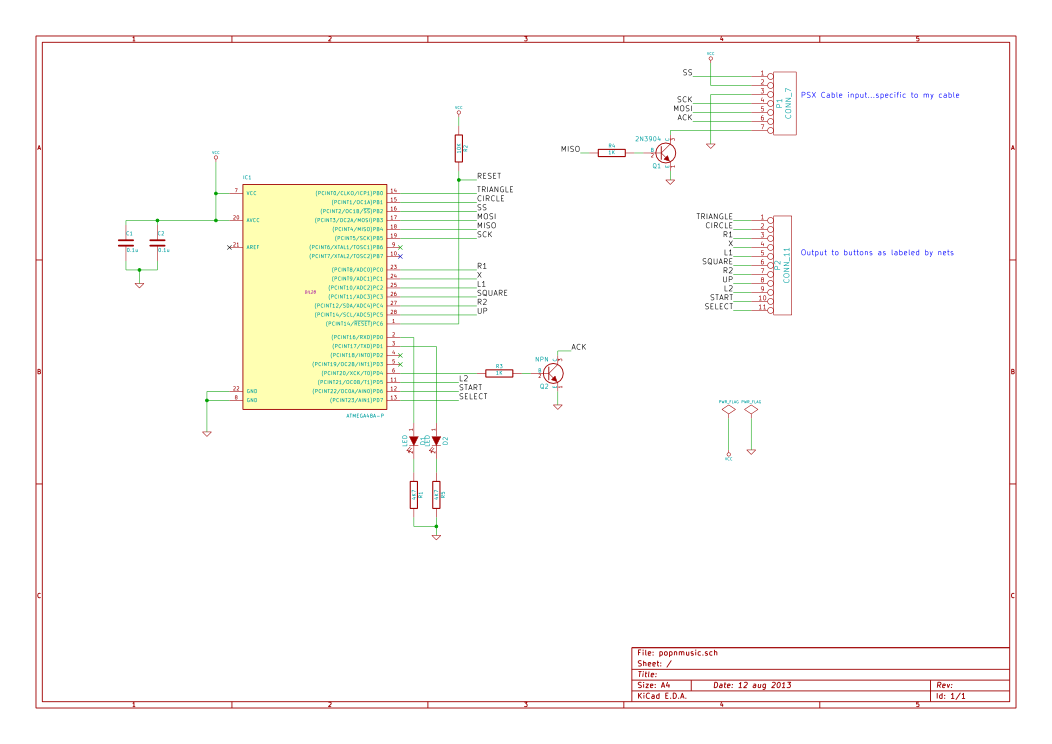

Code & Schematics (kicad): https://github.com/kcuzner/pop-n-music-controller

Introduction

A couple of days ago, I was asked to help do some soldering for a modification someone was trying to do to a PS1 controller. He informed me that it was for the game Pop 'n Music and that it required a special controller to be played properly. Apparently, official controllers can sell for $100 or more, so modifying an existing controller was the logical thing to do. After much work and pain, we found that while modifying an existing controller was easy, the result wasn't very robust and could easily fall apart, so I built one using an ATMega48 and some extra components I had lying around. The microcontroller emulates the PSX bus, which is used to communicate between the controller and the PlayStation/computer. As my reference for the bus, I used the following two web pages:

- http://emu-docs.org/PlayStation/psxcont/ - Schematics and details on the electrical properties of the bus

- http://store.curiousinventor.com/guides/PS2/ - Really good list of commands that the PlayStation sends to the controller and how to respond. More accurate than the previous site when talking about the commands.

The complete schematics and software can be found on my github.

The first attempt: Controller mod

The concept behind the controller mod was simple: Run wires from the existing button pads to some arcade-style buttons arranged in the pattern needed for the controller. It worked well at first, but after a little while we began to have problems:

- The style of pad that he purchased had conductive rubber covering all of the copper for the button landings. In order to solder to this, it was necessary to scrape off the rubber. This introduced a tendency for partially unclean joints, giving rise to cold connections. While with much effort I was able to mitigate this issue (lots of scraping and cleaning), the next problem began to manifest itself.

- The copper layout for each button pad was of a rather minimalist design. While some pads shown online had nice large areas for the button to contact, this particular controller had 50-100 mil lines arranged in a circular pattern rather than one huge land. While I imagine this is either economical or gives better contact, it sure made soldering wires onto it difficult. I would get the wire soldered, only to have it decide that it wanted to come off and take the pad with it later. This was partly due to bad planning on my part and using wire that wasn't flexible enough, but honestly, the pads were not designed to be soldered to.

- With each pad that lifted, the available space for the wires on certain buttons to be attached to began to become smaller and smaller. Some buttons were in the large land style and were very easy to solder to and the joints were strong (mainly the arrow pad buttons). The issue was with the start and select buttons (very narrow) and the X, square, triangle, and O buttons (100mil spiral thing mentioned earlier). Eventually, I was resorting to scraping the solder mask off and using 30awg wire wrapping wire to solder to the traces. It just got ridiculous and wasn't nearly strong enough to hold up as a game controller.

- In order for the controller to be used with a real PlayStation, rather than an emulator, the Left, Right, and Down buttons had to be pressed at the same time to signal to the game that it was a Pop 'n Music controller. Emulators generally can't handle this sort of behavior when mapping the buttons around, so adding a switch was considered. However, any reliable switch (read: nice shiny toggle switch) was around $3. Given the low-cost aim of this project, it was becoming more economical to explore other options.

So, we began exploring other options. I found this site detailing emulation of these controllers using either 74xx logic or a microcontroller. It is a very good resource, and is mostly correct about the protocol. After looking at the 74xx logic solution and totaling up the cost, I noticed that my $1.75 microcontroller along with the required external components would actually come out to be cheaper than buying 4 chips and sockets for them. Even better, I already had a microcontroller and all the parts on hand, so there was no need for shipping. So, I began building and programming.

AVR PSX Bus Emulation: The Saga of the Software

PSX controllers communicate using a bus that has a clock, acknowledge, slave select, PSX->controller (command) line, and controller->PSX (data) line. Yes, this looks a lot like an SPI bus. In fact, it is more or less identical to an SPI Mode 3 bus with the master-in slave-out line driven open collector. I failed to notice this fact until later, much to my chagrin. Communication is accomplished using packets that have a start signal followed by a command and waiting for a response from the controller. During the transaction, the controller declares its type, the number of words that it is going to send, and the actual controller state. I was emulating a standard digital controller, so I had to tell it that my controller type was 0x41, which is digital with 1 word of data. Then, I had to send 0x5A (the start-of-data response byte) and two bytes of button data.

My initial approach involved writing a routine in C that would handle pin changes on INT0 and INT1, which would be connected to the command and clock lines. However, I failed to anticipate that the bus would run somewhere in the neighborhood of 250kHz-500kHz; this caused serious performance problems and I was unable to complete a transaction with the controller host. So, I decided to try writing the same routine in assembly to see if I could squeeze every possible drop of performance out of it. I actually managed to complete a transaction this way, but without sending button data. To make matters worse, every once in a while it would miss a transaction, which was quite noticeable when I made an LED change state with every packet received. It was very inconsistent, and that was without even sending button data. I eventually realized the problem was that making the controller do so much between cycles of the clock line caused it to miss bits. So, I looked at the problem again.

I noticed that the ATMega48A had an SPI module and that the PSX bus looked similar, but not identical, to an SPI bus. Running the SPI module in mode 3 with the data order reversed (LSB first), and with MISO driving the base of a transistor operating as an open-collector output, let me communicate on the PSX bus almost on the first try. Even better, the only software change needed was inverting the data byte, since the transistor inverts the signal, so that the correct levels appeared on the MISO line. So, I hooked up everything as follows:

After doing that, suddenly everything worked. It responded correctly to the computer when asked about its inputs and, after some optimization, stopped skipping packets due to taking too much time processing button inputs. It worked! Soon after getting the controller to talk to the computer, I discovered an error in the website I mentioned earlier that detailed the protocol: it said that during transmission of the button data the control line was going to be left high. While it's a minor difference, I thought I might as well mention this site, which lists the commands correctly and was very helpful.

As I mentioned before, one problem we encountered was that in order for the controller to be recognized as a Pop 'n Music controller by an actual PlayStation, the left, right, and down buttons must be pressed at the same time. However, it seems that the PSX->USB converter we were using was unable to handle having those 3 pressed down at once. So, there needed to be a mode switch. The way of switching modes I came up with was to hold down both start and select at the same time for 3 seconds, after which the mode switches. The UI for this is embodied in two LEDs: one is lit when the controller is in PSX mode and the other when it is in emulator mode. When both buttons are pressed, both LEDs light up until the one for the previous mode shuts off.

At first, the controller started out in the same mode every time it was powered on, no matter what mode it was in when it was shut off. It soon became apparent that this wouldn't do, so I looked into using the EEPROM to store the flag I was using to keep track of the controller's mode. Strangely, it worked on the first try, so the controller now stays in whatever mode it was in the last time it was shut off. My only fear is that switching the mode too much could wear out the EEPROM. However, the datasheet says that it is good for 100,000 erase/write cycles, so I imagine it would be quite a while before this happens, and other parts of the controller will probably fail first (like the switches).

On to the hardware!

I next began assembly. I went the route of perfboard with individual copper pads around each hole because that's what I have. Here are photos of the assembly, sadly taken on my cell phone because my camera is broken. Sorry for the bad quality...

Conclusion

So, with the controller in the box and everything assembled, it seems that all will be well with the controller. It doesn't seem to miss keypresses or freeze and is able to play the game without too many hiccups (the audio makes it difficult, but that's just an emulator tweaking issue). The best part about this project is that in terms of total work time, it probably took only about 16 hours. Considering that most of my projects take months to finish, this easily takes the cake as one of my quickest projects start to finish.

Raspberry Pi as an AVR Programmer

Introduction

Recently, I got my hands on a Raspberry Pi, and one of the first things I wanted to do with it was to turn it into my complete AVR development environment. As part of that, I wanted to make avrdude able to program an AVR directly from the Raspberry Pi with no programmer. I know there is a linuxgpio programmer type that was recently added, but it is so recent that it isn't yet included in the repos, and it also requires a compile-time option to enable it. I noticed that the Raspberry Pi happens to expose its SPI interface on its expansion header, and so I thought to myself, "Why not use this thing instead of bitbanging GPIOs? Wouldn't that be more efficient?" Thus, I began to decipher the avrdude code and write my addition. My hope is that things like this will allow the Raspberry Pi to be used to explore further embedded development for those who want to get into microcontrollers, but blew all their money on the Raspberry Pi. Also, in keeping with the purpose that the Raspberry Pi was originally designed for, using it like this makes it fairly simple for people in educational surroundings to expand into different aspects of small computer and embedded device programming.

As my addition to avrdude, I created a new programmer type called "linuxspi" which uses the userspace SPI drivers (available since roughly Linux 2.6) to talk to the AVR directly. It also requires an additional GPIO to act as the reset line. My initial thought was to use the chip select as the reset output, but sadly, the documentation for the SPI functions mentions that the chip enable line is only held low for the duration of a transaction. While I guess I could compress all the transactions avrdude makes into one giant burst of data, this would be very error prone and isn't compatible with avrdude's program structure. So, the GPIO route was chosen. It just uses the sysfs endpoints found in /sys/class/gpio to switch a GPIO (chosen in avrdude.conf) between a hi-z input state and an output-low state. This way, the reset can be connected via a resistor to Vcc and then the Raspberry Pi just holds reset down when it needs to program the device. Another consequence of using the Linux SPI drivers is that this should be compatible with any Linux-based device that exposes its SPI bus or has an AVR connected to it, not just the Raspberry Pi.
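To make the reset mechanism concrete, here is a minimal Python sketch of the same idea using the legacy sysfs GPIO interface. It is an illustration, not the C code in the avrdude fork; the function names and the "root" parameter (which lets the paths point somewhere harmless for testing) are hypothetical, and it assumes no other driver has already claimed the pin. Writing "low" to a pin's direction file makes it an output that starts low, while writing "in" turns it back into a high-impedance input so the pullup resistor can pull RESET high again.

```python
import os

SYSFS_GPIO = "/sys/class/gpio"  # legacy sysfs GPIO location


def _write(path, text):
    with open(path, "w") as f:
        f.write(text)


def export_gpio(pin, root=SYSFS_GPIO):
    """Ask the kernel to expose the pin to userspace, unless it already is."""
    pin_dir = os.path.join(root, "gpio%d" % pin)
    if not os.path.isdir(pin_dir):
        _write(os.path.join(root, "export"), str(pin))
    return pin_dir


def hold_reset(pin, root=SYSFS_GPIO):
    """Drive RESET low: "low" configures an output whose initial value is 0."""
    _write(os.path.join(export_gpio(pin, root), "direction"), "low")


def release_reset(pin, root=SYSFS_GPIO):
    """Release RESET: as an input the pin is hi-z and the pullup wins."""
    _write(os.path.join(export_gpio(pin, root), "direction"), "in")
```

On real hardware (typically as root), you would call "hold_reset(25)" before talking to the AVR over SPI and "release_reset(25)" afterwards, where 25 is whatever GPIO you picked.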

Usage

So, down to the nitty gritty: How can I use it? Well, at the moment it is in a github repository at https://github.com/kcuzner/avrdude. As with any project that uses the expansion header on the Raspberry Pi, there is a risk that a mistake could cause your Raspberry Pi to die (or let out the magic smoke, so to speak). I assume no responsibility for any damage that may occur as a result of following these directions or using my addition to avrdude. Just be careful when doing anything involving hooking stuff up to the expansion port and use common sense. Remember to measure twice and cut once. With that out of the way, here are the basic steps for installation and usage.

Installation

The best option here until I bother creating packages for it is to do a git clone directly into a directory on the Raspberry Pi and build it from there on the Raspberry Pi itself. I remember having to install the following packages to get it to compile (If I missed any, let me know):

- bison

- autoconf

- make

- gcc

- flex

Also, if your system doesn't have a header at "linux/spi/spidev.h" in your include path, you probably need to install the kernel headers that provide it. I was using Arch Linux and it already had the header there, so for all I know it's always installed. You should also check that "/dev/spidev0.0" and "/dev/spidev0.1" (or something like that) exist; those are the endpoints this programmer uses. If they do not exist, try running "sudo modprobe spi_bcm2708". If the endpoints still aren't there after that, then SPI support probably isn't installed or enabled for your kernel.

After cloning the repo and installing those packages, run the "./bootstrap" script found in the avrdude directory. This runs all the autoconf tools and creates the build scripts. Next, run "./configure" and wait for it to complete. At the end, the configure script should say whether "linuxspi" is enabled or disabled; if it is disabled, it was not able to find the header I mentioned before. Then run "make" and wait for it to complete. Remember that the Raspberry Pi has a single-core ARM processor, so building may take a while. Afterwards, simply do "sudo make install" and you will magically have avrdude installed on your computer in /usr/local. Note that you probably want to uninstall any avrdude you installed previously, either manually or through a package manager. This one is built on top of the latest version (as of May 26th, 2013), so it should work quite well and be up to date for normal avrdude use. I made no changes to any of the programmer types other than the one I added.

To check to see if the avrdude you have is the right one, you should see an output similar to the following if you run this command (tiny-tim is the name of my Raspberry Pi until I think of something better):

kcuzner@tiny-tim:~/avrdude/avrdude$ avrdude -c ?type

Valid programmer types are:
  arduino = Arduino programmer
  avr910 = Serial programmers using protocol described in application note AVR910
  avrftdi = Interface to the MPSSE Engine of FTDI Chips using libftdi.
  buspirate = Using the Bus Pirate's SPI interface for programming
  buspirate_bb = Using the Bus Pirate's bitbang interface for programming
  butterfly = Atmel Butterfly evaluation board; Atmel AppNotes AVR109, AVR911
  butterfly_mk = Mikrokopter.de Butterfly
  dragon_dw = Atmel AVR Dragon in debugWire mode
  dragon_hvsp = Atmel AVR Dragon in HVSP mode
  dragon_isp = Atmel AVR Dragon in ISP mode
  dragon_jtag = Atmel AVR Dragon in JTAG mode
  dragon_pdi = Atmel AVR Dragon in PDI mode
  dragon_pp = Atmel AVR Dragon in PP mode
  ftdi_syncbb = FT245R/FT232R Synchronous BitBangMode Programmer
  jtagmki = Atmel JTAG ICE mkI
  jtagmkii = Atmel JTAG ICE mkII
  jtagmkii_avr32 = Atmel JTAG ICE mkII in AVR32 mode
  jtagmkii_dw = Atmel JTAG ICE mkII in debugWire mode
  jtagmkii_isp = Atmel JTAG ICE mkII in ISP mode
  jtagmkii_pdi = Atmel JTAG ICE mkII in PDI mode
  jtagice3 = Atmel JTAGICE3
  jtagice3_pdi = Atmel JTAGICE3 in PDI mode
  jtagice3_dw = Atmel JTAGICE3 in debugWire mode
  jtagice3_isp = Atmel JTAGICE3 in ISP mode
  linuxgpio = GPIO bitbanging using the Linux sysfs interface (not available)
  linuxspi = SPI using Linux spidev driver
  par = Parallel port bitbanging
  pickit2 = Microchip's PICkit2 Programmer
  serbb = Serial port bitbanging
  stk500 = Atmel STK500 Version 1.x firmware
  stk500generic = Atmel STK500, autodetect firmware version
  stk500v2 = Atmel STK500 Version 2.x firmware
  stk500hvsp = Atmel STK500 V2 in high-voltage serial programming mode
  stk500pp = Atmel STK500 V2 in parallel programming mode
  stk600 = Atmel STK600
  stk600hvsp = Atmel STK600 in high-voltage serial programming mode
  stk600pp = Atmel STK600 in parallel programming mode
  usbasp = USBasp programmer, see http://www.fischl.de/usbasp/
  usbtiny = Driver for "usbtiny"-type programmers
  wiring = http://wiring.org.co/, Basically STK500v2 protocol, with some glue to trigger the bootloader.

Note that right under "linuxgpio" there is now a "linuxspi" driver. If it says "(not available)" after the "linuxspi" description, "./configure" was not able to find the "linux/spi/spidev.h" file and did not compile the linuxspi programmer into avrdude.
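If you want to script that check (in a setup script, say), a small parser over the listing is enough. This sketch assumes each entry has the "name = description" shape shown above and that unavailable types end with "(not available)"; "programmer_types" is a hypothetical helper, not part of avrdude.

```python
def programmer_types(listing):
    """Map each programmer type in `avrdude -c ?type` output to availability.

    Assumes one "name = description" entry per line; types that were not
    compiled in end their description with "(not available)".
    """
    types = {}
    for line in listing.splitlines():
        name, sep, desc = line.partition("=")  # first "=" separates the name
        if not sep:
            continue  # header such as "Valid programmer types are:" or blank
        types[name.strip()] = not desc.rstrip().endswith("(not available)")
    return types
```

Feed it whatever text avrdude printed; depending on the version, that listing may be written to stderr rather than stdout, so capture both.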

Configuration

There is a little bit of configuration that happens here on the Raspberry Pi side before proceeding to wiring it up. You must now decide which GPIO to sacrifice to be the reset pin. I chose 25 because it is next to the normal chip enable pins, but it doesn't matter which you choose. To change which pin is to be used, you need to edit "/usr/local/etc/avrdude.conf" (it will be just "/etc/avrdude.conf" if it wasn't built and installed manually like above). Find the section of the file that looks like so:

programmer
  id = "linuxspi";
  desc = "Use Linux SPI device in /dev/spidev*";
  type = "linuxspi";
  reset = 25;
;

The "reset = " line needs to be changed to have the number of the GPIO that you have decided to turn into the reset pin for the programmer. The default is 25, but that's just because of my selfishness in not wanting to set it to something more generic and having to then edit the file every time I re-installed avrdude. Perhaps a better default would be "0" since that will cause the programmer to say that it hasn't been set up yet.
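Because the file has to be edited again after every reinstall, one way to avoid doing it by hand is a small script that patches only the "linuxspi" block. This is a hypothetical helper, sketched under the assumption that programmer blocks look like the one above: a line containing only "programmer", then "key = value;" lines, then a line containing only ";" to close the block.

```python
import re


def set_linuxspi_reset(conf_text, pin):
    """Return conf_text with `reset = N;` in the linuxspi block set to pin.

    Other programmer blocks, and everything outside them, pass through as-is.
    """
    out, block = [], None
    for line in conf_text.splitlines(True):  # True keeps the line endings
        stripped = line.strip()
        if block is None:
            if stripped == "programmer":
                block = [line]  # start buffering a programmer block
            else:
                out.append(line)
            continue
        block.append(line)
        if stripped == ";":  # a lone ";" closes the block
            is_linuxspi = any(l.strip().startswith("id") and '"linuxspi"' in l
                              for l in block)
            if is_linuxspi:
                # \g<1> keeps "reset = " and only the number is replaced
                block = [re.sub(r"(reset\s*=\s*)\d+", r"\g<1>%d" % pin, l)
                         for l in block]
            out.extend(block)
            block = None
    if block:  # unterminated block at end of file: leave it unchanged
        out.extend(block)
    return "".join(out)
```

You would read /usr/local/etc/avrdude.conf, pass its contents through this function, and write the result back as root.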

Wiring

After setting up avrdude.conf to your desired configuration, you can connect the appropriate wires from your Raspberry Pi's header to your microcontroller. A word of extreme caution: The Raspberry Pi's GPIOs are NOT 5V tolerant, and that includes the SPI pins. You must do one of two things: a) run the AVR and everything around it at 3.3V so that you never see 5V on ANY of the Raspberry Pi pins at any time (including after programming is completed and the device is running), or b) use a level translator between the AVR and the SPI. I happen to have a level translator lying around (it's a fun little TSSOP I soldered to a breakout board a few years back), but I decided to go the 3.3V route since I was trying to get this thing to work.

If you have never had to hook up in-circuit serial programming to your AVR before, perhaps this would be a great time to learn. Consult the datasheet for your AVR and find the pins named RESET (with a bar over it, since it is active low), MOSI, MISO, and SCK. Connect them as follows: RESET goes to your chosen GPIO and also through a pullup resistor to the AVR's Vcc, MOSI goes to the similarly named MOSI pin on the Raspberry Pi header, MISO goes to the like-named pin on the header, and SCK goes to the SPI clock pin (named SCLK on the diagram on elinux.org). The AVR's ground must also be tied to the Raspberry Pi's ground. After doing this and double checking that 5V will never reach the Raspberry Pi, you can power on your AVR and it should be programmable through avrdude. Here is a demonstration of me loading a simple test program I made that flashes the PORTD LEDs:

kcuzner@tiny-tim:~/avrdude/avrdude$ sudo avrdude -c linuxspi -p m48 -P /dev/spidev0.0 -U flash:w:../blink.hex
[sudo] password for kcuzner:

avrdude: AVR device initialized and ready to accept instructions

Reading | ################################################## | 100% 0.00s

avrdude: Device signature = 0x1e9205
avrdude: NOTE: "flash" memory has been specified, an erase cycle will be performed
         To disable this feature, specify the -D option.
avrdude: erasing chip
avrdude: reading input file "../blink.hex"
avrdude: input file ../blink.hex auto detected as Intel Hex
avrdude: writing flash (2282 bytes):

Writing | ################################################## | 100% 0.75s

avrdude: 2282 bytes of flash written
avrdude: verifying flash memory against ../blink.hex:
avrdude: load data flash data from input file ../blink.hex:
avrdude: input file ../blink.hex auto detected as Intel Hex
avrdude: input file ../blink.hex contains 2282 bytes
avrdude: reading on-chip flash data:

Reading | ################################################## | 100% 0.56s

avrdude: verifying ...
avrdude: 2282 bytes of flash verified

avrdude: safemode: Fuses OK

avrdude done. Thank you.

There are two major things to note here:

- I set the programmer type (-c option) to be "linuxspi". This tells avrdude to use my addition as the programming interface

- I set the port (-P option) to be "/dev/spidev0.0". On my Raspberry Pi, this maps to the SPI bus using CE0 as the chip select. Although we don't actually use CE0 to connect to the AVR, it still gets used by the spidev interface and will toggle several times during normal avrdude operation. Your exact configuration may end up being different, but this is more or less how the SPI should be set. If the thing you point to isn't an SPI device, avrdude should fail with a bunch of messages saying that it couldn't send an SPI message.
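For the curious, what travels over that spidev port is the AVR's serial programming instruction set: 4-byte commands clocked out while RESET is held low. The sketch below shows two of them from the AVR datasheets: Programming Enable (0xAC 0x53 0x00 0x00, where a device that is in sync echoes 0x53 in the third byte) and Read Signature Byte (0x30 0x00 address 0x00, with the answer in the fourth byte). It takes a generic "xfer" function (send a list of bytes, get the same number of bytes back) so it can be exercised without hardware. Using "xfer2" from the third-party py-spidev package is an assumption about how you might wire it up, and this is not how the linuxspi code in avrdude is structured.

```python
PROGRAMMING_ENABLE = [0xAC, 0x53, 0x00, 0x00]


def enter_programming_mode(xfer):
    """Send Programming Enable; True if the AVR echoed 0x53 in byte 3."""
    reply = xfer(list(PROGRAMMING_ENABLE))
    return reply[2] == 0x53


def read_signature(xfer):
    """Read the 3 signature bytes (instruction 0x30) as one integer."""
    signature = 0
    for address in range(3):
        reply = xfer([0x30, 0x00, address, 0x00])
        signature = (signature << 8) | reply[3]  # data arrives in byte 4
    return signature

# Possible hardware hookup (assumes py-spidev, with RESET already held low):
#   import spidev
#   spi = spidev.SpiDev()
#   spi.open(0, 0)              # bus 0, chip select 0 -> /dev/spidev0.0
#   spi.max_speed_hz = 100000   # keep SCK well below the AVR's clock / 4
#   if enter_programming_mode(spi.xfer2):
#       print(hex(read_signature(spi.xfer2)))
```

With an ATmega48 attached, the signature should come back as 0x1e9205, matching the avrdude log above.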

Other than that, usage is pretty straightforward and should be the same as if you were using any other programmer type.

Future

As issues crop up, I hope to add improvements like changing the clock frequency and maybe someday adding TPI support (not sure if necessary since this is using the dedicated SPI and as far as I know, TPI doesn't use SPI).

I hope that those using this can find it helpful in their fun and games with the Raspberry Pi. If there are any issues compiling and stuff, either open an issue on github or mention it in the comments here.

Lessons in game design...in a car!

For the greater part of this week I have been trapped in a car driving across the United States for my sister's wedding. Of course, I had my trusty netbook with me and so I decided to do some programming. After discovering that I had unwittingly installed tons of documentation in my /usr/share/doc folder (don't you love those computer explorations that actually reveal something?), I started building yet another rendition of Tetris in Python. I did several things differently this time, the code is actually readable, and I think some of the structural things I learned (or rediscovered) are worth sharing here.

Game State Management

One problem that has constantly plagued my weak attempts at games has been an inability to do menus and manage whether the game is paused or not in a reasonably simple manner. Of course, I could always hack something together, but I could also write the whole thing in assembly language or maybe write it all as one big function in C. The issue is that if I program myself into a hole, I usually end up giving up a bit unless I am getting paid (then I generally bother thinking before programming so that I don't waste people's time). After finishing the game logic and actually getting things to work with the game itself, I remembered a funny tutorial I was once doing for OGRE which introduced a very simple game engine. The concept was one of a "stack" of game states. Using this stack, your main program injects input into, and requests a rendering from, the state on top of the stack. The states manipulate the stack by pushing a new state or popping themselves. When the stack is empty, the program exits.

My game state stack works by using an initial state that loads the resources (the render section gives back a loading screen). After it finishes loading the resources, it asks the manager to pop itself from the stack and then push on the main menu state. The menu state is quite simple: It displays several options where each option either does something immediate (like popping the menu from the stack to end the program in the case of the Quit option) or something more complex (like pushing a sub-menu state on to create a new game with some settings). After that there are states for playing the game, viewing the high scores, etc.

The main awesome thing about this structure is that switching states is so easy. Want to pause the game? Just push on a state that doesn't do anything until it receives a key input or something, after which it pops itself. Feel like doing a complex menu system? The stack keeps track of the "breadcrumbs" so to speak. Never before have I been able to actually create an easily extensible/modification-friendly game menu/management system that doesn't leave me with a headache later trying to understand it or unravel my spaghetti code.

The second awesome thing is that this helps encourage an inversion of control. Rather than the state itself dictating when it gets input or when it renders something, the program driving it can choose whether it wants to render that state or give input to that state. I ended up using the render function as a game loop function as well, so if I wanted a state to sort of "pause" execution, I could simply stop telling it to render (usually by just pushing a state on top of it). My main program (in Python) was about 25 lines long and took care of setting up the window, starting the game states, and then just injecting input into the game state and asking the state to render a window. The states themselves could choose how they wanted to render the window or even if they wanted to (that gets into the event bubbling section which is next). It is so much easier to operate that way, as the components are loosely tied to one another and there is a clear relationship between them. The program owns the states and the states know nothing about the program driving them...only that their input comes from somewhere and the renderings they are occasionally asked to do go somewhere.
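Here is a minimal sketch of such a stack in Python. The class names ("StateManager", "LoadingState", and so on) and the "handle_input"/"render" method names are illustrative, not taken from the Tetris code described here, and the "frame" is just a list standing in for a real window surface.

```python
class StateManager(object):
    """Owns a stack of states; only the top one gets input and renders."""
    def __init__(self, initial):
        self.stack = []
        self.push(initial)

    def push(self, state):
        state.manager = self  # states only know the manager, not the program
        self.stack.append(state)

    def pop(self):
        return self.stack.pop()

    @property
    def running(self):
        return bool(self.stack)  # an empty stack means the program exits

    def handle_input(self, key):
        if self.stack:
            self.stack[-1].handle_input(key)

    def render(self, frame):
        if self.stack:
            self.stack[-1].render(frame)


class State(object):
    manager = None

    def handle_input(self, key):
        pass

    def render(self, frame):
        pass


class LoadingState(State):
    def render(self, frame):
        frame.append("Loading...")
        # (resources would be loaded here)
        self.manager.pop()              # remove ourselves...
        self.manager.push(MenuState())  # ...and hand over to the menu


class MenuState(State):
    def handle_input(self, key):
        if key == "n":
            self.manager.push(PlayState())
        elif key == "q":
            self.manager.pop()          # popping the last state quits

    def render(self, frame):
        frame.append("Menu")


class PlayState(State):
    def handle_input(self, key):
        if key == "p":
            self.manager.push(PauseState())  # game stops getting renders
        elif key == "esc":
            self.manager.pop()               # back to the menu "breadcrumb"

    def render(self, frame):
        frame.append("Playing")


class PauseState(State):
    def handle_input(self, key):
        self.manager.pop()              # any key resumes the game

    def render(self, frame):
        frame.append("Paused")
```

A main loop then only has to call "render" and feed keys to "handle_input" until "running" becomes False; pausing falls out of the design, because the paused game simply stops being on top.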

Event bubbling

When making my Tetris game, I wanted an easy way to know when to update or redraw the screen rather than always redrawing it. My idea was to create an event system that would "notice" when the game changed somehow: whether a block had moved or maybe the score changed. I remembered two event systems that I really liked: C#'s event keyword plus delegate thing and Javascript's event bubbling system. Some people probably hate both of those, but I have learned to love them. Mainly, it's just because I'm new to this sort of thing and am still being dazzled by their plethora of uses. Using some knowledge I gleaned from my database abstraction project about the fun special method names in Python's data model, I created the following:

class EventDispatcher(object):
    """
    Event object which operates like C# events

    "event += handler" adds a handler to this event
    "event -= handler" removes a handler from this event
    "event(obj)" calls all of the handlers, passing the passed object

    Events may be temporarily suppressed by using them in a with
    statement. The context returned will be this event object.
    """
    def __init__(self):
        """
        Initializes a new event
        """
        self.__handlers = []
        self.__suppress_count = 0
    def __call__(self, e):
        if self.__suppress_count > 0:
            return
        # iterate over a copy so a handler may unsubscribe while being called
        for h in list(self.__handlers):
            h(e)
    def __iadd__(self, other):
        if other not in self.__handlers:
            self.__handlers.append(other)
        return self
    def __isub__(self, other):
        self.__handlers.remove(other)
        return self
    def __enter__(self):
        self.__suppress_count += 1
        return self
    def __exit__(self, exc_type, exc_value, traceback):
        self.__suppress_count -= 1

My first thought when making this was to do it like C# events: super flexible, but no defined "way" to do them. The event dispatcher uses the same syntax as C# events (+= and -=, via __iadd__ and __isub__) to add and remove handlers, and to call each handler (() via __call__). It also has the added functionality of optionally suppressing events by using them inside a "with" statement (which may actually be breaking the pattern, but I needed it to avoid some interesting redrawing issues). I would add EventDispatcher objects to represent each type of event that I wanted to catch and then pass an Event object into the event to send it off to the listening functions. However, I ran into an issue with this: Although I was able to cut down the number of events being sent and how far they propagated, I would occasionally lose events. The issue this caused is that my renderer was listening to find out where it should "erase" blocks and where it should "add" blocks and it would sometimes seem to forget to erase some of the blocks. I later discovered that I had simply forgotten to call the event during a certain function which was called periodically to move the Tetris blocks down, but even so, it got me started on the next thing which I feel is better.

Javascript events work by specifying types of events, a "target" or object in the focus of the event, and arguments that get passed along with the event. The events then "bubble" upward through the DOM, firing first for a child and then for the parent of that child until it reaches the top element. The advantage of this is that if one wants to know, for example, if the screen has been clicked, a listener doesn't have to listen at the lowest leaf element of each branch of the DOM; it can simply listen at the top element and wait for the "click" event to "bubble" upwards through the tree. After my aforementioned issue I initially thought that I was missing events because my structure was flawed, so I ended up re-using the above class to implement event bubbling by doing the following:

class Event(object):
    """
    Instance of an event to be dispatched
    """
    def __init__(self, target, name, *args, **kwargs):
        self.target = target
        self.name = name
        self.args = args
        self.kwargs = kwargs

class EventedObject(object):
    def __init__(self, parent=None):
        self.__parent = parent
        self.event = EventDispatcher()
        self.event += self.__on_event
    def __on_event(self, e):
        if hasattr(self.__parent, 'event'):
            self.__parent.event(e)
    @property
    def parent(self):
        return self.__parent
    @parent.setter
    def parent(self, value):
        l = self.parent
        self.__parent = value
        self.event(Event(self, "parent-changed", current=self.parent, last=l))

The example here is the base object from which all of my moving game objects (blocks, polyominoes, the game grid, etc.) derive. It defines a single EventDispatcher, through which Event objects are passed. It listens to its own event and when it hears something, it activates its parent's event, passing through the same object that it received. The advantage here is that by listening to just one "top" object, all of the events that occurred for the child objects are passed to whatever handler is attached to the top object's dispatcher. In my specific implementation I had each block send an Event up the pipeline when it was moved, each polyomino send an Event when it was moved or rotated, and the game send an Event when the score, level, or line count was changed. By having my renderer listen to just the game object's EventDispatcher I was able to intercept all of these events at one location.

The disadvantage with this particular method is that each movement has a potentially high computational cost. All of my events are synchronous since Python doesn't do true multithreading and I didn't need a high performance implementation. It's just method calls, but there is a potential for a stack overflow if either a chain of parents has a loop somewhere or if I simply have too tall of an object tree. If I attach too many listeners to a single EventDispatcher, it will also slow things down.
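One way to take the stack-depth concern off the table is to walk the parent chain in a loop rather than through nested handler calls, and to remember which objects have already been visited so a parent loop raises an error instead of recursing until Python gives up. This is an alternative sketch, not how EventedObject above works; "Node" and "bubble" are hypothetical names, and a node is reduced to a parent link plus a list of handlers.

```python
class Node(object):
    """Hypothetical stand-in for EventedObject: a parent link plus handlers."""
    def __init__(self, parent=None):
        self.parent = parent
        self.handlers = []


def bubble(node, event):
    """Deliver event to node, then to each ancestor, without recursion.

    Walking the chain in a loop means a tall tree can't exhaust the call
    stack, and the set of visited ids turns an accidental parent cycle
    into an immediate error instead of an endless walk.
    """
    seen = set()
    while node is not None:
        if id(node) in seen:
            raise ValueError("cycle detected in parent chain")
        seen.add(id(node))
        for handler in list(node.handlers):
            handler(event)
        node = node.parent
```

The trade-off is that handlers can no longer stop or alter propagation by choosing not to forward the event, which the self-subscribing design above would allow.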

Another potential problem is a memory leak, which I suspect I have (I haven't tested it; this is entirely in my head from thinking about the issues). Since I am asking the EventDispatcher to add a handler which is a bound method of the object which owns it, there is a loop there. In the event that I forget all references to the EventedObject, the reference count will never decrease to 0, since the EventDispatcher inside the EventedObject still holds a reference to that same EventedObject inside the bound method. I would think that this could cause garbage collection to never happen. Of course, they could make the garbage collector really smart and notice that the dependency tree here is a nice orphan tree detached from the rest and can all be collected. However, if it is a dumb garbage collector, it will probably keep it around. This isn't a new issue for me: I ran into it when doing something like this on one of my C# projects. However, the way I solved it there was to implement the IDisposable interface and upon disposal, unsubscribe from all events that created a circular dependency. The problem there was worse because there wasn't a direct exclusive link between the two objects like there is with this one (here one is a property of the other, a strong link, and the other only references a bound method of its partner, a weak...kinda...link).
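For what it's worth, CPython pairs reference counting with a cycle-detecting garbage collector (the "gc" module), so an orphaned EventedObject and its dispatcher should eventually be reclaimed; before Python 3.4, the notable exception was a cycle containing an object with a __del__ method. If you would rather not create the cycle at all, the dispatcher can hold its handlers through weak references. The sketch below is a hypothetical "WeakEventDispatcher" (not part of the code above) and relies on weakref.WeakMethod, which only exists in Python 3.4 and later.

```python
import types
import weakref


class WeakEventDispatcher(object):
    """EventDispatcher variant that does not keep its handlers alive."""
    def __init__(self):
        self._refs = []

    def __iadd__(self, handler):
        if isinstance(handler, types.MethodType):
            # A bound method is a temporary object; WeakMethod tracks the
            # underlying instance and function instead (Python 3.4+).
            self._refs.append(weakref.WeakMethod(handler))
        else:
            self._refs.append(weakref.ref(handler))
        return self

    def __isub__(self, handler):
        self._refs = [ref for ref in self._refs if ref() != handler]
        return self

    def __call__(self, e):
        for ref in list(self._refs):
            handler = ref()
            if handler is not None:  # None means its owner was collected
                handler(e)
        # forget references whose targets have been garbage collected
        self._refs = [ref for ref in self._refs if ref() is not None]
```

The catch is the mirror image of the leak: a handler with no other owner, such as a lambda passed straight to "+=", is collected right away and silently stops firing.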

Overall, even though there are those disadvantages, I feel that the advantage gained by having all the events in one place is worth it. In the future I will create "filters" that can be attached to handlers as they are subscribed to avoid calling the handlers for events that don't match their filter. This is similar to Javascript in that handlers can be used to catch one specific type of event. However, mine differs in that in the spirit of Python duck typing, I decided to make no distinction between types of events outside of a string name that identifies what it is. Since I only had one EventDispatcher per object, it makes sense to only have one type of event that will be fed to its listeners. The individual events can then just be differentiated by a property value. While this feels flaky to me since I usually feel most comfortable with strong typing systems, it seems to be closer to what Python is trying to do.
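The planned filters could look something like this: each subscription carries an optional set of event names, and the dispatcher skips handlers whose set doesn't include the incoming event's "name". "FilteredDispatcher", "subscribe", and "unsubscribe" are hypothetical names for this sketch, and the Event class is trimmed down from the one above.

```python
class Event(object):
    """Trimmed-down event: the object it concerns plus a string name."""
    def __init__(self, target, name):
        self.target = target
        self.name = name


class FilteredDispatcher(object):
    """Dispatcher whose handlers may ask for specific event names only."""
    def __init__(self):
        self._subscriptions = []  # (handler, frozenset of names or None)

    def subscribe(self, handler, *names):
        # no names given means "call me for every event"
        self._subscriptions.append((handler, frozenset(names) or None))

    def unsubscribe(self, handler):
        self._subscriptions = [(h, n) for (h, n) in self._subscriptions
                               if h != handler]

    def __call__(self, e):
        for handler, names in list(self._subscriptions):
            if names is None or e.name in names:
                handler(e)
```

Matching on the string name keeps the duck-typed spirit described above: there is still only one Event type, and the filter is just a set lookup.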

Conclusion

I eventually will put up this Tetris implementation as a gist or repository on github (probably just a gist unless it gets huge...which it could). So far I have learned a great deal about game design and structure, so this should get interesting as I explore other things like networking and such.

Database Abstraction in Python

As I was recently working on trying out the Flask web framework for Python, I ended up wanting to access my MySQL database. Recently at work I have been using entity framework and I have gotten quite used to having a good database abstraction that allows programmatic creation of SQL. While such frameworks exist in Python, I thought it would be interesting to try writing one. This is one great example of getting carried away with a seemingly simple task.

I aimed for these things:

- Tables should be represented as objects, with each instance of the object representing a row

- These objects should be able to generate their own insert, select, and update queries

- Querying the database should be accomplished by logical predicates, not by strings

- Update queries should be optimized to only update those fields which have changed

- The database objects should have support for "immutable" fields that are generated by the database

I also wanted to be able to do relations between tables with foreign keys, but I have decided to stop there for now. I have a structure outlined, but it isn't necessary at this point since all I wanted was a database abstraction for my simple Flask project. I will probably implement it later.

This can be found as a gist here: https://gist.github.com/kcuzner/5246020

Example

Before going into the code, here is an example of what this abstraction can do as it stands. It directly uses the DbObject and DbQuery-inheriting objects which are shown further down in this post.

from db import *
import hashlib

def salt_password(user, unsalted):
    if user is None:
        return unsalted
    m = hashlib.sha512()
    m.update(user.username)
    m.update(unsalted)
    return m.hexdigest()

class User(DbObject):
    dbo_tablename = "users"
    primary_key = IntColumn("id", allow_none=True, mutable=False)
    username = StringColumn("username", "")
    password = PasswordColumn("password", salt_password, "")
    display_name = StringColumn("display_name", "")
    def __init__(self, **kwargs):
        DbObject.__init__(self, **kwargs)
    @classmethod
    def load(cls, cur, username):
        selection = cls.select('u')
        selection[0].where(selection[1].username == username)
        result = selection[0].execute(cur)
        if len(result) == 0:
            return None
        else:
            return result[0]
    def match_password(self, password):
        salted = salt_password(self, password)
        return salted == self.password

#assume there is a function get_db defined which returns a PEP-249
#database object
def main():
    db = get_db()
    cur = db.cursor()
    user = User.load(cur, "some username")
    user.password = "a new password!"
    user.save(cur)
    db.commit()

    new_user = User(username="someone", display_name="Their name")
    new_user.password = "A password that will be hashed"
    new_user.save(cur)
    db.commit()

    print new_user.primary_key # this will now have a database assigned id

This example first loads a user using a DbSelectQuery. The user is then modified and the DbObject-level function save() is used to save it. Next, a new user is created and saved using the same function. After saving, the primary key will have been populated and will be printed.

Change Tracking Columns

I started out with columns. I needed columns that track changes and have a mapping to an SQL column name. I came up with the following:

class ColumnSet(object):
    """
    Object which is updated by ColumnInstances to inform changes
    """
    def __init__(self):
        self.__columns = {} # column instances, keyed by column name
        i_dict = type(self).__dict__
        for attr in i_dict:
            obj = i_dict[attr]
            if isinstance(obj, Column):
                # we get an instance of this column
                self.__columns[obj.name] = ColumnInstance(obj, self)

    @property
    def mutated(self):
        """
        Returns the mutated columns for this tracker.
        """
        output = []
        for name in self.__columns:
            column = self.get_column(name)
            if column.mutated:
                output.append(column)
        return output

    def get_column(self, name):
        return self.__columns[name]

class ColumnInstance(object):
    """
    Per-instance column data. This is used in ColumnSet objects to hold data
    specific to that particular instance
    """
    def __init__(self, column, owner):
        """
        column: Column object this is created for
        owner: ColumnSet instance this value belongs to
        """
        self.__column = column
        self.__owner = owner
        self.update(column.default)

    def update(self, value):
        """
        Updates the value for this instance, resetting the mutated flag
        """
        if value is None and not self.__column.allow_none:
            raise ValueError("'None' is invalid for column '" +
                self.__column.name + "'")
        if self.__column.validate(value):
            self.__value = value
            self.__origvalue = value
        else:
            raise ValueError("'" + str(value) + "' is not valid for column '" +
                self.__column.name + "'")

    @property
    def column(self):
        return self.__column

    @property
    def owner(self):
        return self.__owner

    @property
    def mutated(self):
        return self.__value != self.__origvalue

    @property
    def value(self):
        return self.__value

    @value.setter
    def value(self, value):
        if value is None and not self.__column.allow_none:
            raise ValueError("'None' is invalid for column '" +
                self.__column.name + "'")
        if not self.__column.mutable:
            raise AttributeError("Column '" + self.__column.name + "' is not" +
                " mutable")
        if self.__column.validate(value):
            self.__value = value
        else:
            # str() so non-string values don't raise a TypeError here
            raise ValueError("'" + str(value) + "' is not valid for column '" +
                self.__column.name + "'")

class Column(object):
    """
    Column descriptor for a column
    """
    def __init__(self, name, default=None, allow_none=False, mutable=True):
        """
        Initializes a column

        name: Name of the column this maps to
        default: Default value
        allow_none: Whether none (db null) values are allowed
        mutable: Whether this can be mutated by a setter
        """
        self.__name = name
        self.__allow_none = allow_none
        self.__mutable = mutable
        self.__default = default

    def validate(self, value):
        """
        In a child class, this will validate values being set
        """
        raise NotImplementedError

    @property
    def name(self):
        return self.__name

    @property
    def allow_none(self):
        return self.__allow_none

    @property
    def mutable(self):
        return self.__mutable

    @property
    def default(self):
        return self.__default

    def __get__(self, owner, ownertype=None):
        """
        Gets the value for this column for the passed owner
        """
        if owner is None:
            return self
        if not isinstance(owner, ColumnSet):
            raise TypeError("Columns are only allowed on ColumnSets")
        return owner.get_column(self.name).value

    def __set__(self, owner, value):
        """
        Sets the value for this column for the passed owner
        """
        if not isinstance(owner, ColumnSet):
            raise TypeError("Columns are only allowed on ColumnSets")
        owner.get_column(self.name).value = value

class StringColumn(Column):
    def validate(self, value):
        if value is None and self.allow_none:
            return True
        if isinstance(value, basestring):
            return True
        return False

class IntColumn(Column):
    def validate(self, value):
        if value is None and self.allow_none:
            return True
        if isinstance(value, int) or isinstance(value, long):
            return True
        return False

class PasswordColumn(Column):
    def __init__(self, name, salt_function, default=None, allow_none=False,
            mutable=True):
        """
        Create a new password column which uses the specified salt function

        salt_function: a function(self, value) which returns the salted string
        """
        Column.__init__(self, name, default, allow_none, mutable)
        self.__salt_function = salt_function
    def validate(self, value):
        return True
    def __set__(self, owner, value):
        salted = self.__salt_function(owner, value)
        super(PasswordColumn, self).__set__(owner, salted)

The Column class describes a column and is implemented as a descriptor. Each ColumnSet instance contains multiple columns and holds ColumnInstance objects, which store the per-object state of each column, such as its value and whether it has been mutated. Each column type has a validation function to screen invalid data out of its column. When a ColumnSet is instantiated, it scans itself for columns and creates its ColumnInstances at that moment.
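To make the descriptor arrangement concrete, here is a minimal sketch. It is not the code from this project: SketchColumn, SketchColumnInstance and SketchColumnSet are hypothetical, stripped-down stand-ins (no validation, no mutability check), and walking the class's MRO is just one assumed way a ColumnSet could "scan itself for columns".

```python
class SketchColumn(object):
    """Hypothetical, stripped-down stand-in for Column (no validation)."""

    def __init__(self, name, default=None):
        self.name = name
        self.default = default

    def __get__(self, instance, owner=None):
        if instance is None:
            return self  # accessed on the class: hand back the descriptor
        return instance.get_column(self.name).value

    def __set__(self, instance, value):
        instance.get_column(self.name).value = value


class SketchColumnInstance(object):
    """Per-object state for one column: its value and a mutated flag."""

    def __init__(self, column):
        self.column = column
        self._value = column.default
        self.mutated = False

    @property
    def value(self):
        return self._value

    @value.setter
    def value(self, new_value):
        self._value = new_value
        self.mutated = True


class SketchColumnSet(object):
    """Hypothetical stand-in for ColumnSet: scans its class for columns."""

    def __init__(self):
        self._instances = {}
        # Walk the MRO so columns declared on base classes are found too;
        # the first (most derived) declaration of a column name wins.
        for klass in type(self).__mro__:
            for attr in vars(klass).values():
                if isinstance(attr, SketchColumn):
                    self._instances.setdefault(attr.name,
                                               SketchColumnInstance(attr))

    def get_column(self, name):
        return self._instances[name]


# Usage: the descriptors live on the class, the values live per object
class User(SketchColumnSet):
    username = SketchColumn("username", "")
    age = SketchColumn("age", 0)

user = User()
user.age = 30
# user.age == 30, and only the "age" ColumnInstance is marked as mutated
```

Keeping the state in a per-object instance rather than on the descriptor matters because the descriptor is shared by every object of the class.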

Generation of SQL using logical predicates

The next thing I had to create was the database querying structure. I decided that rather than using the ColumnInstance or Column objects directly, I would use a go-between object that can be assigned a "prefix". A common thing to do in SQL queries is to rename (alias) the tables in the query so that you can reference the same table multiple times or use different tables with the same column names. For example, if I had a table called posts and a table called users that both had a column called 'last_update', I could assign the prefix 'p' to the post columns and the prefix 'u' to the user columns, so that the final column names would be 'p.last_update' and 'u.last_update' for posts and users respectively.
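The aliasing idea can be seen in isolation with a throwaway in-memory database. This sketch assumes the posts/users tables from the example above with made-up data, and uses Python's bundled sqlite3 module only because it needs no server; the queries in this post use %s placeholders, whereas sqlite3 expects ?, but no parameters are needed here.

```python
import sqlite3

# Hypothetical tables matching the posts/users example; both have last_update
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE users (id INTEGER PRIMARY KEY, last_update TEXT);
    CREATE TABLE posts (id INTEGER PRIMARY KEY, user_id INTEGER,
                        last_update TEXT);
    INSERT INTO users VALUES (1, '2014-01-01');
    INSERT INTO posts VALUES (10, 1, '2014-02-01');
""")

# With the prefixes p and u, the two last_update columns are unambiguous
rows = conn.execute(
    "SELECT p.last_update, u.last_update "
    "FROM posts p JOIN users u ON p.user_id = u.id"
).fetchall()
# rows == [('2014-02-01', '2014-01-01')]

# Without a prefix, SQLite cannot tell which last_update is meant
try:
    conn.execute("SELECT last_update FROM posts "
                 "JOIN users ON posts.user_id = users.id")
    ambiguous_error = None
except sqlite3.OperationalError as exc:
    ambiguous_error = str(exc)

conn.close()
```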

Another thing I wanted to do was avoid writing raw SQL when constructing my queries. This is similar to the way LINQ works in C#: a predicate is specified and later translated into either an SQL query or a series of in-memory operations, depending on what is going on. So, in Python one of my queries looks like this:

class Table(ColumnSet):
    some_column = StringColumn("column_1", "")
    another = IntColumn("column_2", 0)

a_variable = 5
columns = Table.get_columns('x') # columns with a prefix 'x'
query = DbQuery() # This base class just makes a where statement
query.where((columns.some_column == "4") & (columns.another > a_variable))
print query.sql

This would print out a tuple (" WHERE x.column_1 = %s AND x.column_2 > %s", ["4", 5]). So, how does this work? I used operator overloading to create DbQueryExpression objects. The code is like so:

class DbQueryExpression(object):
    """
    Query expression created from columns, literals, and operators
    """
    def __and__(self, other):
        return DbQueryConjunction(self, other)
    def __or__(self, other):
        return DbQueryDisjunction(self, other)

    def __str__(self):
        raise NotImplementedError
    @property
    def arguments(self):
        raise NotImplementedError

class DbQueryConjunction(DbQueryExpression):
    """
    Query expression joining together a left and right expression with an
    AND statement
    """
    def __init__(self, l, r):
        DbQueryExpression.__init__(self)
        self.l = l
        self.r = r
    def __str__(self):
        return str(self.l) + " AND " + str(self.r)
    @property
    def arguments(self):
        return self.l.arguments + self.r.arguments

class DbQueryDisjunction(DbQueryExpression):
    """
    Query expression joining together a left and right expression with an
    OR statement
    """
    def __init__(self, l, r):
        DbQueryExpression.__init__(self)
        self.l = l
        self.r = r
    def __str__(self):
        # Parenthesized so that a neighbouring AND (which binds tighter in
        # SQL) cannot split this OR apart
        return "(" + str(self.l) + " OR " + str(self.r) + ")"
    @property
    def arguments(self):
        return self.l.arguments + self.r.arguments

class DbQueryColumnComparison(DbQueryExpression):
    """
    Query expression comparing a combination of a column and/or a value
    """
    def __init__(self, l, op, r):
        DbQueryExpression.__init__(self)
        self.l = l
        self.op = op
        self.r = r
    def __str__(self):
        output = ""
        if isinstance(self.l, DbQueryColumn):
            prefix = self.l.prefix
            if prefix is not None:
                output += prefix + "."
            output += self.l.name
        elif self.l is None:
            output += "NULL"
        else:
            output += "%s"
        output += " " + self.op + " "
        if isinstance(self.r, DbQueryColumn):
            prefix = self.r.prefix
            if prefix is not None:
                output += prefix + "."
            output += self.r.name
        elif self.r is None:
            output += "NULL"
        else:
            output += "%s"
        return output
    @property
    def arguments(self):
        # Literal values, in the same order as their %s placeholders
        output = []
        if not isinstance(self.l, DbQueryColumn) and self.l is not None:
            output.append(self.l)
        if not isinstance(self.r, DbQueryColumn) and self.r is not None:
            output.append(self.r)
        return output

class DbQueryColumnSet(object):
    """
    Represents a set of columns attached to a specific DbObject type. This
    object dynamically builds itself based on a passed type. The columns
    attached to this set may be used in DbQueries
    """
    def __init__(self, dbo_type, prefix):
        d = dbo_type.__dict__
        self.__columns = {}
        for attr in d:
            obj = d[attr]
            if isinstance(obj, Column):
                column = DbQueryColumn(dbo_type, prefix, obj.name)
                setattr(self, attr, column)
                self.__columns[obj.name] = column
    def __len__(self):
        return len(self.__columns)
    def __getitem__(self, key):
        return self.__columns[key]
    def __iter__(self):
        return iter(self.__columns)

class DbQueryColumn(object):
    """
    Represents a Column object used in a DbQuery
    """
    def __init__(self, dbo_type, prefix, column_name):
        self.dbo_type = dbo_type
        self.name = column_name
        self.prefix = prefix

    def __lt__(self, other):
        return DbQueryColumnComparison(self, "<", other)
    def __le__(self, other):
        return DbQueryColumnComparison(self, "<=", other)
    def __eq__(self, other):
        # Comparisons against NULL must use IS rather than =
        op = "="
        if other is None:
            op = "IS"
        return DbQueryColumnComparison(self, op, other)
    def __ne__(self, other):
        op = "!="
        if other is None:
            op = "IS NOT"
        return DbQueryColumnComparison(self, op, other)
    def __gt__(self, other):
        return DbQueryColumnComparison(self, ">", other)
    def __ge__(self, other):
        return DbQueryColumnComparison(self, ">=", other)

The __str__ method and the arguments property both work recursively: __str__ builds the SQL text using the column prefixes, while arguments collects the literal values in the same order as their %s placeholders. As can be seen, this supports parameterized queries. To be honest, this part was the most fun, since I was surprised how easy it was to build predicate expressions with a minimum of classes. One thing I didn't like, however, is that Python's boolean and/or operators cannot be overloaded. For that reason I had to use the bitwise & and | operators, so the expressions don't read quite correctly. Since & and | also bind more tightly than comparison operators such as == and >, every comparison has to be wrapped in its own parentheses, as in the example above.
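The parentheses requirement is easy to trip over, so here is a small self-contained sketch of it. Col and Pred are hypothetical, cut-down stand-ins for DbQueryColumn and DbQueryExpression, not the classes above; they only support == and &.

```python
class Pred(object):
    """Hypothetical predicate: SQL text plus its parameter list."""

    def __init__(self, sql, args):
        self.sql = sql
        self.args = args

    def __and__(self, other):
        # & stands in for AND because Python's `and` cannot be overloaded
        return Pred(self.sql + " AND " + other.sql, self.args + other.args)


class Col(object):
    """Hypothetical column whose == builds a Pred instead of a bool."""

    def __init__(self, name):
        self.name = name

    def __eq__(self, other):
        return Pred(self.name + " = %s", [other])

    __hash__ = object.__hash__  # stay hashable on Python 3 after defining __eq__


a, b = Col("x.a"), Col("x.b")

# Parenthesized: each comparison becomes a Pred, then & joins them
ok = (a == 1) & (b == 2)
# ok.sql == "x.a = %s AND x.b = %s" and ok.args == [1, 2]

# Unparenthesized: & binds tighter than ==, so this parses as
# a == (1 & b) == 2, and int & Col has no implementation
try:
    a == 1 & b == 2
    precedence_error = None
except TypeError as exc:
    precedence_error = exc
```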

This DbQueryExpression is fed into my DbQuery object, which does the actual translation to SQL. In the example above, I just passed a logical expression into my where function. That expression was actually a DbQueryExpression, since my overloaded operators create DbQueryExpression objects whenever columns are compared. The DbQueryColumnSet object is a dynamically generated object containing the go-between column objects, and it is created from a DbObject. We will discuss the DbObject a little further down.

The DbQuery objects are implemented as follows:

class DbQueryError(Exception):
    """
    Raised when there is an error constructing a query
    """
    def __init__(self, msg):
        Exception.__init__(self, msg)
        self.message = msg
    def __str__(self):
        return self.message

class DbQuery(object):
    """
    Represents a base SQL Query to a database based upon some DbObjects

    All of the methods implemented here are valid on select, update, and
    delete statements.
    """
    def __init__(self, execute_filter=None):
        """
        execute_filter: Function called with (query, cursor) when the DbQuery
        is executed
        """
        self.__where = []
        self.__limit = None
        self.__orderby = []
        self.__execute_filter = execute_filter
    def where(self, expression):
        """Specify an expression to append to the WHERE clause"""
        self.__where.append(expression)
    def limit(self, value=None):
        """Specify the limit to the query"""
        self.__limit = value
    @property
    def sql(self):
        query = ""
        args = []
        if len(self.__where) > 0:
            # Multiple where() calls are combined with AND
            where = self.__where[0]
            for clause in self.__where[1:]:
                where = where & clause
            args = where.arguments
            query += " WHERE " + str(where)
        # ORDER BY must come before LIMIT, so subclasses supply it via a hook
        query += self._orderby_sql()
        if self.__limit is not None:
            # int() so a non-integer can't be spliced into the query text
            query += " LIMIT " + str(int(self.__limit))
        return query, args
    def _orderby_sql(self):
        """Returns the ORDER BY clause; the base query has none"""
        return ""
    def execute(self, cur):
        """
        Executes this query on the passed cursor and returns either the result
        of the filter function or the cursor if there is no filter function.
        """
        query, args = self.sql
        cur.execute(query, args)
        if self.__execute_filter:
            return self.__execute_filter(self, cur)
        else:
            return cur

class DbSelectQuery(DbQuery):
    """
    Creates a select query to a database based upon DbObjects
    """
    def __init__(self, execute_filter=None):
        DbQuery.__init__(self, execute_filter)
        self.__select = []
        self.__froms = []
        self.__joins = []
        self.__orderby = []
    def select(self, *columns):
        """Specify one or more columns to select"""
        self.__select += columns
    def from_table(self, dbo_type, prefix):
        """Specify a table to select from"""
        self.__froms.append((dbo_type, prefix))
    def join(self, dbo_type, prefix, on):
        """Specify a table to join to"""
        self.__joins.append((dbo_type, prefix, on))
    def orderby(self, *columns):
        """Specify one or more columns to order by"""
        self.__orderby += columns
    def _orderby_sql(self):
        if len(self.__orderby) == 0:
            return ""
        return " ORDER BY " + ','.join([col.prefix + "." +
                                        col.name for col in self.__orderby])
    @property
    def sql(self):
        query = "SELECT "
        args = []
        if len(self.__select) == 0:
            raise DbQueryError("No selection in DbSelectQuery")
        query += ','.join([col.prefix + "." +
                           col.name for col in self.__select])
        if len(self.__froms) == 0:
            raise DbQueryError("No FROM clause in DbSelectQuery")
        # A query has one FROM clause; multiple tables are comma separated
        query += " FROM " + ", ".join([table[0].dbo_tablename + " " + table[1]
                                       for table in self.__froms])
        for join in self.__joins:
            query += (" JOIN " + join[0].dbo_tablename + " " + join[1] +
                      " ON " + str(join[2]))
            # Literals in the ON expression precede those in the WHERE clause
            args += join[2].arguments
        # The parent adds WHERE, then ORDER BY (via _orderby_sql), then LIMIT
        query_parent = super(DbSelectQuery, self).sql
        query += query_parent[0]
        args += query_parent[1]
        return query, args

class DbInsertQuery(DbQuery):
    """
    Creates an insert query to a database based upon DbObjects. This does not
    include any where or limit expressions
    """
    def __init__(self, dbo_type, prefix, execute_filter=None):
        DbQuery.__init__(self, execute_filter)
        self.table = (dbo_type, prefix)
        self.__values = []